Artificial intelligence won’t just make change management faster — it will force us to rethink what change management actually is.

For decades, change management has followed a familiar rhythm. Leaders identify a transformation need, bring in change leads, run a diagnosis, design a program and then mobilize the team or organization through a cascade of communications, training events and steering committees. The process is largely sequential, manual and uniform, regardless of whether you are changing a payroll system or integrating a new growth model.

AI may already be making that rhythm feel very old-fashioned.

This is not simply a question of automating existing tasks, such as using a chatbot to draft a change communication or a dashboard to track adoption rates. The deeper shift is structural. AI makes it possible to sense, analyze, personalize and adapt change in near real time, at a scale and granularity that no team of humans could previously manage. That changes what change management is, not just how it is done.

From project to organism

The dominant mental model for change has always been the project: a bounded effort with a start, a middle and an end. Even agile change approaches tend to preserve this frame: they iterate, but they still conclude.

AI enables a fundamentally different model: the organization as a continuously sensing, adapting system. (This is perhaps similar in concept to the core idea of the recently published Octopus Organization.) Rather than deploying a feedback survey every twelve months and calling it “pulse checking,” leaders will have access to persistent, real-time intelligence about how an organization is absorbing change. They will be able to see where energy is building, where resistance is forming and where drift is setting in before it becomes obvious.

Network analysis of communication patterns can surface the informal influencers who actually shape sentiment on the ground: not just the people with “Change Champion” in their email signature, but the ones their peers actually turn to. Predictive modelling can identify, weeks in advance, which teams are likely to stall on a particular transition. Sentiment analysis across multiple channels can give change leaders a live picture of psychological safety across the organization.
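For readers who want to see how simple the core of that network idea can be, here is a minimal sketch in Python. It assumes a hypothetical list of (seeker, advisor) pairs, meaning "this person turned to that person," and ranks people by how many distinct peers turn to them. The function name, the data and the choice of this one measure are illustrative assumptions, not a description of any particular tool, and real communication data would need consent and privacy safeguards before anyone touched it.

```python
from collections import defaultdict


def rank_informal_influencers(advice_edges, top_n=3):
    """Rank people by how many distinct peers turn to them.

    advice_edges: iterable of (seeker, advisor) pairs. This input format is
    a hypothetical illustration, not the output of any specific product.
    """
    seekers_by_advisor = defaultdict(set)
    for seeker, advisor in advice_edges:
        if seeker != advisor:  # ignore self-references
            seekers_by_advisor[advisor].add(seeker)

    # Sort by number of distinct seekers (descending), then by name so ties
    # are ordered predictably.
    ranked = sorted(
        ((advisor, len(seekers)) for advisor, seekers in seekers_by_advisor.items()),
        key=lambda item: (-item[1], item[0]),
    )
    return ranked[:top_n]


# Made-up example data: nobody here holds a formal "Change Champion" title.
edges = [
    ("ana", "raj"), ("ben", "raj"), ("cy", "raj"),
    ("raj", "dee"), ("ana", "dee"),
    ("ben", "raj"),  # a repeat contact still counts as one peer
    ("dee", "eli"),
]
print(rank_informal_influencers(edges))
# → [('raj', 3), ('dee', 2), ('eli', 1)]
```

Counting distinct peers is only the crudest measure of influence. It says who is consulted, not whether their advice helps or hinders the change, which is where human judgment comes back in.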

“The question shifts from ‘how do we roll out this change?’ to ‘how do we create an organization that is genuinely good at changing?’”

None of this replaces the need for human judgment; quite the opposite. It gives leaders and practitioners far richer information to work with. But it also means that the old excuse, “we didn’t know morale was that low until the survey came back six months later,” no longer holds.

Personalization at scale

One of the chronic failures of change programs is that they communicate to an average employee who does not actually exist. The same all-staff email lands in the inbox of a 22-year-old graduate in digital product and a 54-year-old operations specialist who has survived nine restructures. Neither finds it particularly relevant. Both disengage.

AI makes genuine personalization viable. It enables different messages, learning pathways, timings and formats that are calibrated to role, readiness level, prior experience and even communication preferences. This is not manipulation; it is the difference between shouting at a crowd and actually talking to people.

At its best, this shifts the experience of change from something that happens to people towards something that acknowledges their individual context and meets them where they are. At its worst (and the risk is real), it becomes a sophisticated nudging machine that creates the appearance of consent without the substance of it. The line between personalized communication and behavioral engineering is one that every organization will need to draw deliberately.

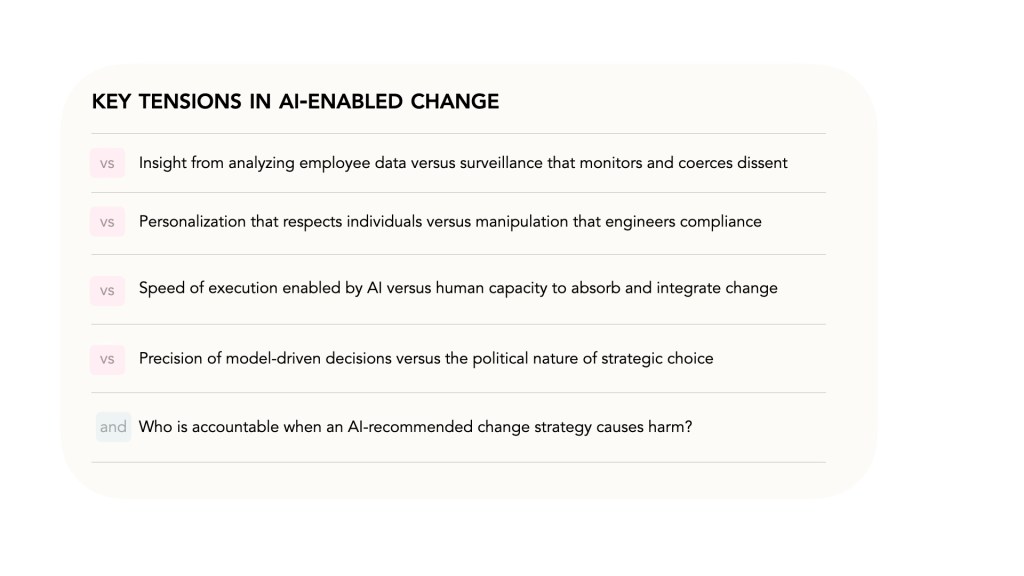

The ethical tensions leaders must navigate

These are not hypothetical concerns. Organizations that race to deploy AI capabilities in change management without resolving these questions will not just face reputational risk — they will undermine the very trust that makes change possible in the first place.

The paradox of speed

AI will make it possible to plan and execute change significantly faster than before. Strategy cycles that once took months can compress to weeks. Readiness assessments that once required survey design, fieldwork and analysis can be generated in days. This is genuinely valuable.

But human beings do not absorb change faster because the technology enabling it has improved. Cognitive load, emotional processing, identity adjustment and cultural shift operate on deeply human timescales that no model can accelerate. The risk is that AI-enabled organizations create a chronic condition of change fatigue, moving faster than their people can follow and generating the very resistance and disengagement they were trying to prevent. Anyone who has parented adolescents knows how challenging it can be when intellectual understanding and emotional readiness aren’t aligned.

The discipline for leaders is not simply to ask “how fast can we move?” but “how fast should we move, given the human system we are asking to change?”

What this means for the change profession

AI will absorb much of the analytical, templating and program coordination work that currently occupies a significant portion of change practitioners’ time. This will feel threatening to some of us. It should also feel liberating.

The work that AI genuinely cannot do (facilitating difficult conversations, reading a room, building psychological safety in a leadership team, navigating the political dynamics of a resistant leader) is precisely the work where skilled change professionals create the most value. The profession will need to lean into that relational, judgment-intensive work and resist the temptation to position AI as simply a productivity tool that lets practitioners do more of the same.

There is also a real risk of deskilling. Practitioners who rely on AI scaffolding without developing underlying capability will become brittle: unable to exercise judgment when the model is wrong or when the situation is genuinely novel. Investing in human capability alongside AI capability is not a nice-to-have; it is a resilience requirement.

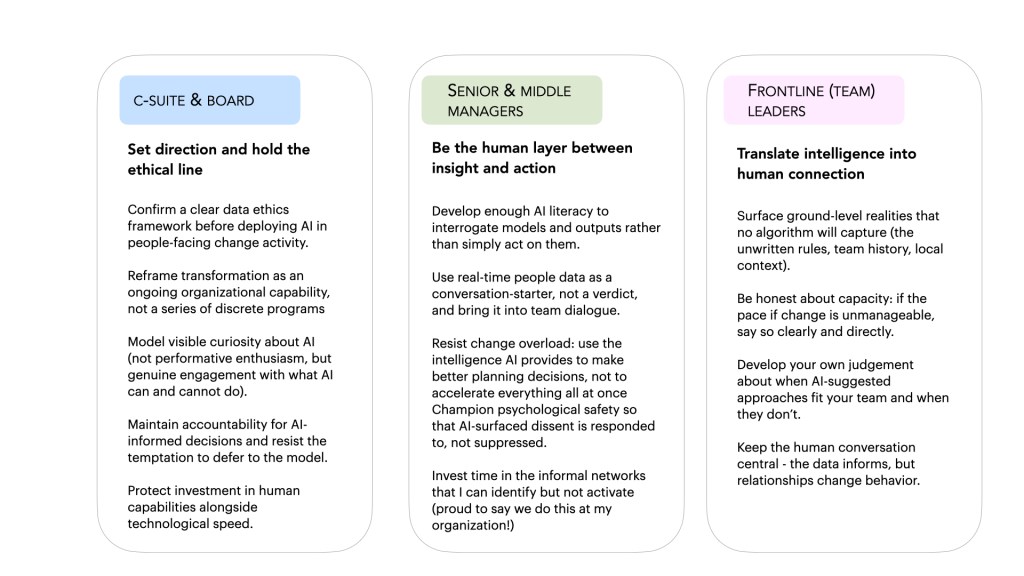

Enabling the shift: what different leaders need to do

Making this transition well is not purely a technology question. It requires deliberate action at every level of the organization. The roles are distinct, and so are the responsibilities.

For Change & HR Professionals

We are both the primary beneficiaries and the primary stewards of this shift. The opportunity is to move from program delivery to capability architecture: helping the organization build the systems, skills and habits that make continuous adaptation possible. That means getting close to the AI tools being deployed, contributing to their ethical design and developing a clear point of view about where human judgment is non-negotiable. It also means advocating for the slow work: the trust-building, listening and honest conversation that no tool replaces.

The organizations that navigate this transition well will not be those that automate fastest. They will be those that use AI to understand their people more deeply and then have the wisdom to act on that understanding with care, honesty and appropriate humility about what the model does not know.
