Why the advocate model isn’t enough — and what to do instead

In 2022, a global financial services firm launched an enterprise AI program. They followed the established playbook: they trained 200 change champions, built a community of super-users, cascaded communications and measured adoption through completion rates. Twelve months later, adoption metrics looked strong. But when leaders asked which AI use cases were actually generating value, the answer was far less clear.

The champions had done their jobs. They’d distributed messages, supported training and encouraged logins. What they hadn’t done — because the model didn’t ask them to — was surface the creative, messy, high-value experimentation happening quietly across teams. A procurement analyst had developed a prompting approach that cut vendor research time by 40%. A communications team had built a drafting workflow that their counterparts in three other regions would have loved. Nobody connected the dots.

This sort of gap is not unique to any one organization or scenario. It reflects a structural mismatch between how most organizations have built their change champion models and what AI transformation really demands.

Why AI Changes the Equation

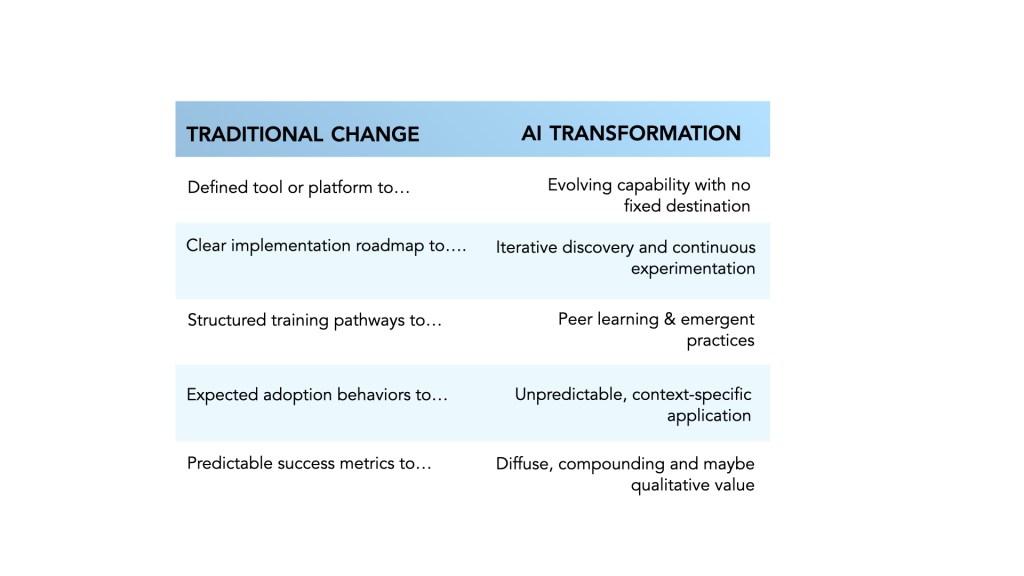

Traditional change champion networks were built for a different kind of change. They were designed around a relatively stable future state — a defined tool, a clear implementation roadmap and predictable success metrics. The champion’s job was essentially logistical: accelerate awareness, support training and smooth adoption.

AI transformation rarely follows this pattern. The comparison below illustrates the fundamental shift:

What this means in practice is that value often emerges through experimentation rather than predefined use cases. Many of the most impactful AI applications are discovered by individuals solving practical problems in their day-to-day work:

- A marketer who finds a prompting technique that transforms how she synthesizes competitive intelligence

- A product leader who cuts customer feedback analysis from three days to three hours

- An operations partner who builds a documentation workflow so effective that his entire function adopts it within weeks

None of these individuals were designated champions. None were waiting for formal direction. And in organizations where the change model is built primarily for message distribution, their insights often go unshared.

From Advocates to Explorers

The most effective change champions in an AI environment are not simply advocates for adoption. They are facilitators of discovery. This is not a cosmetic rebranding — it represents a genuine shift in what the role is for and how it creates value.

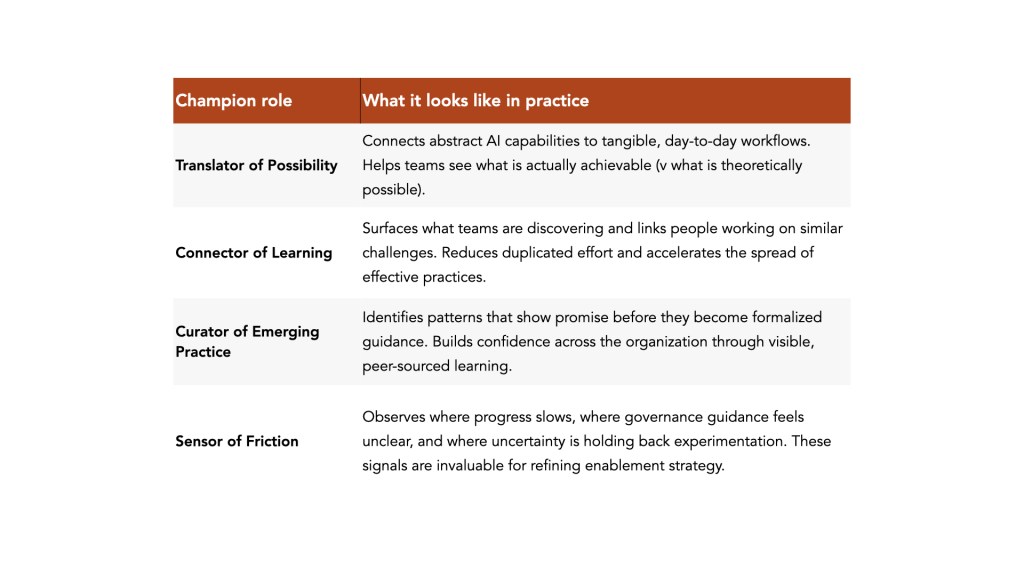

Consider the four dimensions of an expanded champion role:

These roles are not sequential. Effective champions move between them fluidly, depending on where their teams are in the learning journey.

The Risk of Underestimating Grassroots Energy

One of the defining characteristics of AI transformation is that innovation is distributed. No central team can anticipate every valuable use case. No single training program can cover the diversity of real workflows across functions, seniority levels, and geographies.

Organizations that recognize this tend to see measurable differences in outcomes. When grassroots experimentation is genuinely supported — not just permitted in principle — they report faster discovery of high-value use cases, stronger cross-functional engagement, and more durable confidence in applying AI to real work.

When experimentation is constrained or fragmented, two types of failure emerge:

- Employees experiment quietly but don’t share what they learn, fearing it isn’t “official” enough or not wanting to expose imperfect work

- Teams wait for formal direction before engaging, treating uncertainty as a reason to pause rather than explore

Both scenarios limit value creation, and both are, to a significant degree, a function of how the change model is designed.

It’s also worth naming a real tension here: distributed experimentation creates governance risks. When individuals are discovering and sharing AI applications informally, there is a genuine risk of inconsistent practices, unvetted outputs, and use cases that cross ethical or regulatory lines. This is not an argument against grassroots energy but one for structuring champion networks so that they connect experimentation to governance, not bypass it.

How Strategic Change Leaders Can Evolve Champion Models

The following shifts are not about replacing what has worked. They are about extending the model to capture value that most organizations are currently leaving unrealized.

Expand the Definition of Contribution

Most champion recognition frameworks reward advocacy behaviors: attending training, hosting launch events, responding to surveys. Broaden what counts as a contribution to include:

- Surfacing a practical use case and documenting it for others

- Facilitating a peer learning conversation (even informally)

- Identifying a point of friction and raising it with enablement leads

- Connecting two colleagues who are working on similar challenges

When contribution is defined this broadly, far more people can participate meaningfully and the network starts to function as an intelligence system, not just a distribution channel.

Create Structured Space for Experimentation Conversations

Informal learning happens regardless of whether organizations create space for it. The question is whether it stays informal. Consider building in regular, lightweight touchpoints (e.g. peer learning sessions, async channels or short champion calls) specifically structured around:

- What are you testing? What prompted it?

- Where is AI reducing effort or improving quality? Where is it falling short?

- What guidance would help you or your team experiment more confidently?

- What patterns are you seeing that others should know about?

These questions often surface insights that formal reporting structures miss and they build the habit of sharing, which compounds over time.

Connect Champions to Governance, Not Just Communications

Change champions are often positioned primarily in relation to the communications and training functions. In an AI environment, the most valuable connective tissue may be between champions and governance structures. When champions understand responsible use boundaries, they can help their teams experiment more confidently — and flag concerns earlier. This requires:

- Giving champions access to governance guidance, not just adoption messaging

- Creating a clear escalation path for champions who surface ambiguous use cases

- Treating governance questions from champions as signal, not noise

Recognize Exploration as Value Creation

Many early AI use cases produce incremental improvements: a task that takes 20% less time, a document that requires two fewer rounds of revision, a decision that is made with better information. These gains rarely appear in formal transformation metrics, but they compound.

Recognition doesn’t require a formal program. It can be as simple as leaders asking champions to share one thing they’ve discovered in a team meeting, or a brief spotlight in an internal newsletter. It sends the signal that exploration is valued at least as much as compliance and it has an outsized effect on participation.

Address Champion Burnout Proactively

Expanding the champion role is only sustainable if the load is manageable. When the role is purely additive — new responsibilities layered onto existing jobs without support or recognition — champions disengage. The most effective programs treat champion time as a real investment:

- Explicit allocation of time for champion activities, not just implicit permission

- Regular check-ins on what champions need, not just what they’re delivering

- Clear boundaries on what the champion role is not responsible for

The Opportunity Ahead

AI transformation is not a one-time change initiative with a defined end state. It is a capability shift that will continue to evolve for years. The organizations that build durable AI capability (not just initial adoption) are those that treat learning as a continuous, distributed process rather than a program to be managed centrally.

Change champions are uniquely positioned to be the connective tissue of that learning ecosystem. But only if the model asks them to be.

When champions help translate possibility into practice, experimentation becomes more purposeful. When they help connect learners to each other and to governance guidance, both speed and safety improve. When their contributions are recognized, participation grows. And when participation grows, the organization’s collective intelligence about AI accelerates in ways that no central team can replicate alone.

Key questions for change leaders

Is your current champion model designed to distribute messages, or to surface intelligence? Do your champions have a clear path to connect what they discover with governance teams? How are you recognizing experimentation, not just adoption?

And what would it take to give your champions 10% of their time back, and the room to actually use it?
